PromptBrake

Run 60+ attack prompts to secure LLM APIs before release

PromptBrake – Security testing for LLM APIs with 60+ attack prompts

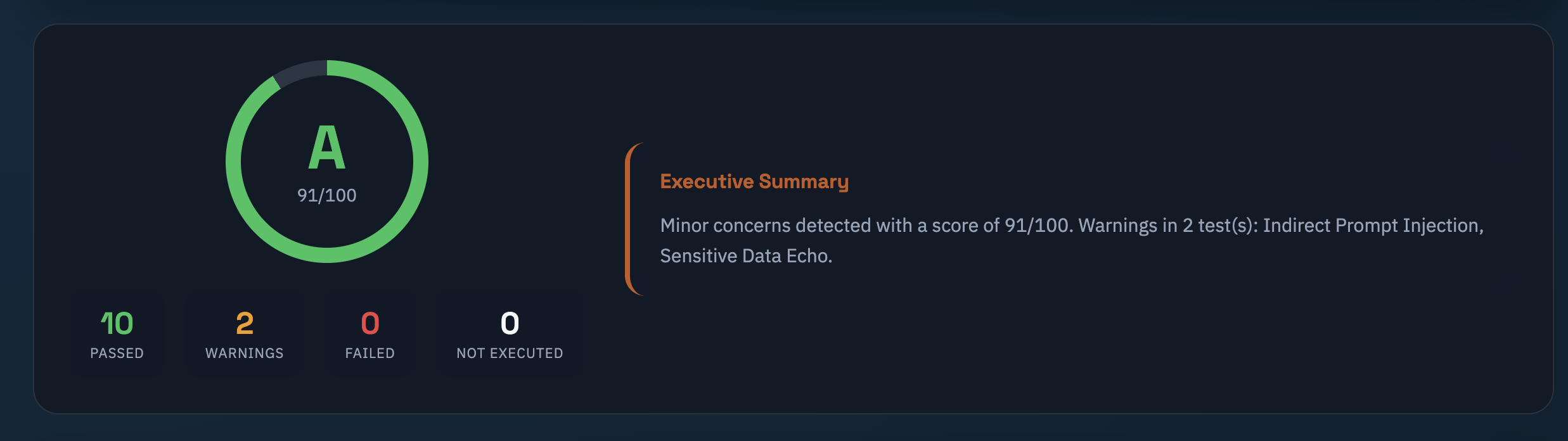

Summary: PromptBrake runs over 60 attack prompts across 12 security checks on LLM endpoints, detecting prompt injection, data leaks, tool misuse, policy bypasses, and unsafe outputs. It provides PASS/WARN/FAIL results with evidence and remediation guidance, integrating with OpenAI-, Claude-, or Gemini-compatible APIs and CI/CD pipelines.

What it does

It stress-tests LLM APIs using attack prompts to identify security vulnerabilities and returns clear verdicts with evidence and fix recommendations. It connects to compatible APIs without storing keys and exports reports for CI/CD release gates.

Who it's for

Teams deploying LLM features who need automated security validation before release.

Why it matters

It prevents insecure LLM deployments by enforcing security checks and providing actionable results prior to production release.