OpenAI WebSocket Mode for Responses API

Persistent AI agents. Up to 40% faster.

Summary: WebSocket Mode for the Responses API keeps one persistent connection open and sends only the incremental input on each turn instead of the full context. In workflows with heavy tool calling, this reduces end-to-end latency by up to 40%. Session state is kept in memory, so multi-turn agent interactions no longer resend the conversation history every turn.

What it does

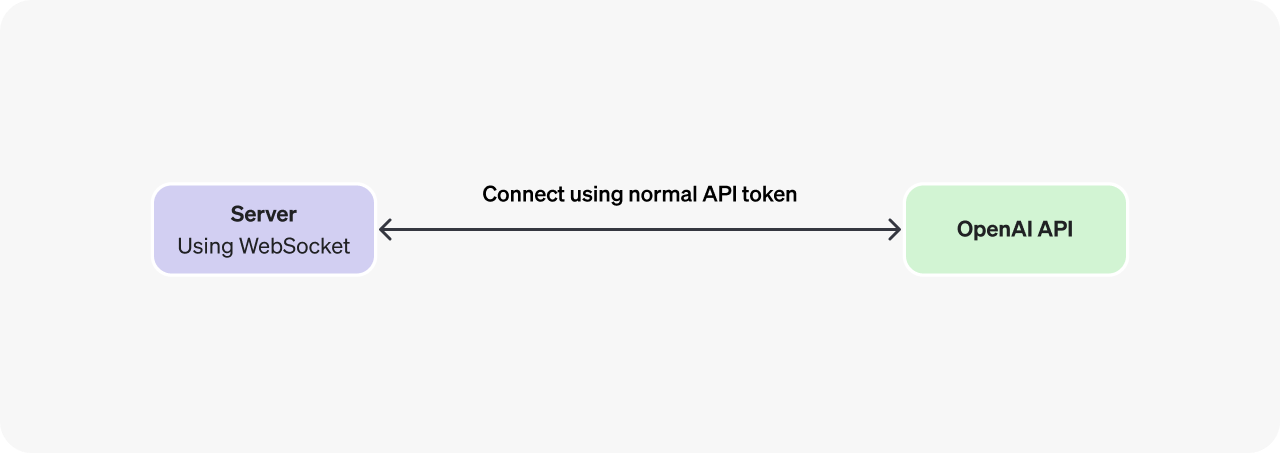

Instead of setting up a new HTTP request for every turn, the client holds a single persistent WebSocket connection to /v1/responses. Each turn transmits only the incremental input while session state is preserved in memory, which makes multi-turn agent workflows faster.
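To make the difference concrete, here is a minimal sketch that compares how many bytes a client would send over 20 tool-call turns in each style. It does not call the real API: the `{"input": [...]}` payload shape and the helper names (`full_context_payloads`, `incremental_payloads`, `total_bytes`) are assumptions made for illustration, not the actual wire format.

```python
import json


def full_context_payloads(turns):
    """Stateless style: every request carries the whole history so far."""
    history = []
    payloads = []
    for turn in turns:
        history.append(turn)
        # Hypothetical payload shape; the real request format may differ.
        payloads.append(json.dumps({"input": list(history)}))
    return payloads


def incremental_payloads(turns):
    """Persistent-connection style: each message carries only the new item."""
    return [json.dumps({"input": [turn]}) for turn in turns]


def total_bytes(payloads):
    """Total UTF-8 size of all payloads sent by the client."""
    return sum(len(p.encode("utf-8")) for p in payloads)


# Simulate 20 turns, each adding one tool result to the conversation.
turns = [{"role": "user", "content": f"tool result {i}"} for i in range(20)]

full = total_bytes(full_context_payloads(turns))
incr = total_bytes(incremental_payloads(turns))

print(f"full context: {full} bytes, incremental: {incr} bytes")
```

In the full-context style the bytes sent grow roughly quadratically with the number of turns, while the incremental style grows linearly. This only models upload size; any latency saving in practice also depends on server-side processing, which the sketch does not attempt to measure.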

Who it's for

Teams running agentic coding tools, browser automation loops, or orchestration systems that make repeated tool calls and where latency affects the user experience.

Why it matters

It reduces the latency and infrastructure overhead caused by resending the full conversation history on every turn, which improves performance on complex, multi-step tasks.