UNC

HuggingFace transformer compiler for optimized inference

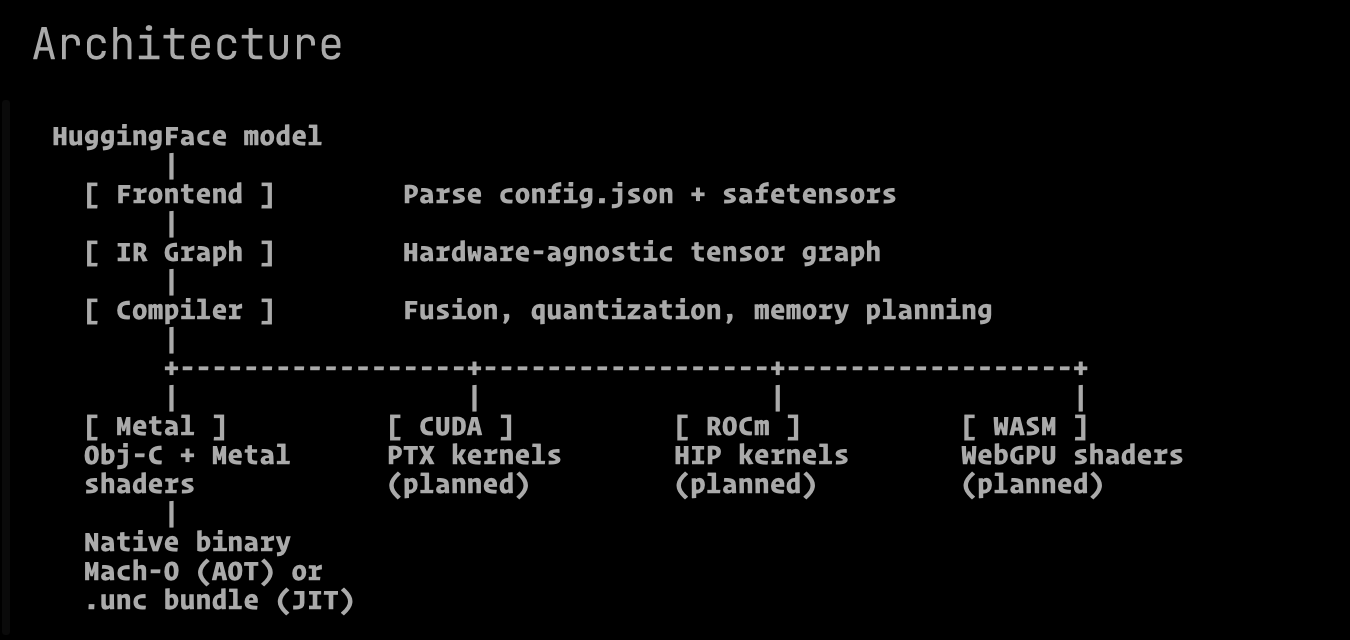

UNC – HuggingFace transformer compiler for optimized Apple Silicon inference

Summary: UNC compiles HuggingFace transformer models into native Metal binaries for Apple Silicon, delivering faster inference with 25% less GPU power and 1.7x better energy efficiency than mlx-lm. The compiled binaries need no runtime framework or Python interpreter, and they are compact, which reduces CPU instruction count and power consumption.

What it does

UNC uses JIT/AOT compilation to convert HuggingFace models into optimized native binaries that run efficiently on Apple Silicon without additional runtime dependencies.

Who it's for

Developers and users needing efficient, low-power transformer model inference on Apple Silicon devices.

Why it matters

It reduces GPU power draw and CPU instruction count, enabling faster, more energy-efficient on-device inference with less heat and lower resource use.
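
As a rough illustration of how the summary's two figures relate, the sketch below works out the throughput ratio they would imply. It assumes energy efficiency means tokens per joule (throughput divided by power) and that the 25% and 1.7x figures were measured on the same workload and the same power rail; neither assumption is confirmed by the listing, and the function name `implied_speedup` is hypothetical.

```python
def implied_speedup(power_reduction: float, efficiency_gain: float) -> float:
    """Throughput ratio implied by a power reduction and an efficiency gain.

    Assumption (not stated in the listing): efficiency is tokens per joule,
    i.e. throughput / power, so
        efficiency_gain = throughput_ratio / relative_power
    and therefore
        throughput_ratio = efficiency_gain * relative_power.
    """
    relative_power = 1.0 - power_reduction  # e.g. 0.75 for "25% less power"
    return efficiency_gain * relative_power


# Figures quoted in the summary, relative to mlx-lm.
speedup = implied_speedup(power_reduction=0.25, efficiency_gain=1.7)
print(f"Implied throughput ratio vs. baseline: {speedup:.2f}x")
```

Under those assumptions the two numbers together correspond to a throughput gain of roughly 1.3x. If the efficiency figure was measured against total system power rather than GPU power, the implied ratio would differ.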