Sovereign-Lila-E8

Scaling is dead. Geometry is the new Scale.

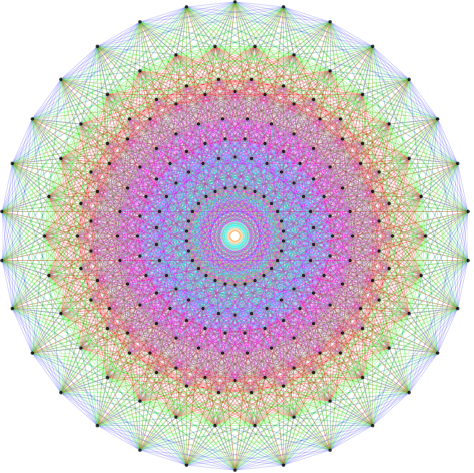

Sovereign-Lila-E8 – Transformer using E8 Root System for geometric scaling

Summary: Sovereign-Lila-E8 is a transformer architecture that replaces standard attention with attention structured by the E8 root system lattice. Rather than adding parameters, it scales by increasing manifold packing density. The project reports state-of-the-art performance at 40 million parameters and stable outputs beyond 1000 tokens without semantic loops.

What it does

It builds the root system of the exceptional Lie algebra E8 directly into the attention weights, with the aim of scaling geometrically and running more efficiently than a traditional transformer.
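
For readers unfamiliar with the geometry, here is a minimal sketch of the E8 root system itself: 240 vectors in 8 dimensions, all with squared length 2. It uses the standard textbook construction and is not taken from the project's code; the names `e8_roots` and `dot` are illustrative assumptions, and the sketch says nothing about how Sovereign-Lila-E8 maps these vectors onto attention weights.

```python
from itertools import combinations, product


def e8_roots():
    """Return the 240 roots of E8 as 8-tuples (standard construction, illustrative only)."""
    roots = []
    # 112 integer roots: exactly two nonzero entries, each +1 or -1.
    for i, j in combinations(range(8), 2):
        for si, sj in product((1.0, -1.0), repeat=2):
            v = [0.0] * 8
            v[i], v[j] = si, sj
            roots.append(tuple(v))
    # 128 half-integer roots: every entry is +1/2 or -1/2,
    # with an even number of minus signs.
    for signs in product((0.5, -0.5), repeat=8):
        if sum(1 for s in signs if s < 0) % 2 == 0:
            roots.append(signs)
    return roots


def dot(u, v):
    """Plain Euclidean inner product of two equal-length tuples."""
    return sum(a * b for a, b in zip(u, v))


roots = e8_roots()
# Collect every inner product that occurs between pairs of roots.
inner_products = {dot(u, v) for u in roots for v in roots}
# For one fixed root, count the roots at inner product +1 with it.
neighbours = sum(1 for v in roots if dot(roots[0], v) == 1.0)
```

The small set of inner products that occurs between roots is what makes the system so rigid: any two roots are identical, opposite, orthogonal, or at 60 or 120 degrees to each other.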

Who it's for

Researchers and developers seeking efficient transformer models with advanced geometric attention mechanisms.

Why it matters

It targets the scaling limits of standard transformers, using what the project calls geometric resonance to improve performance without increasing the parameter count.