SentinelText

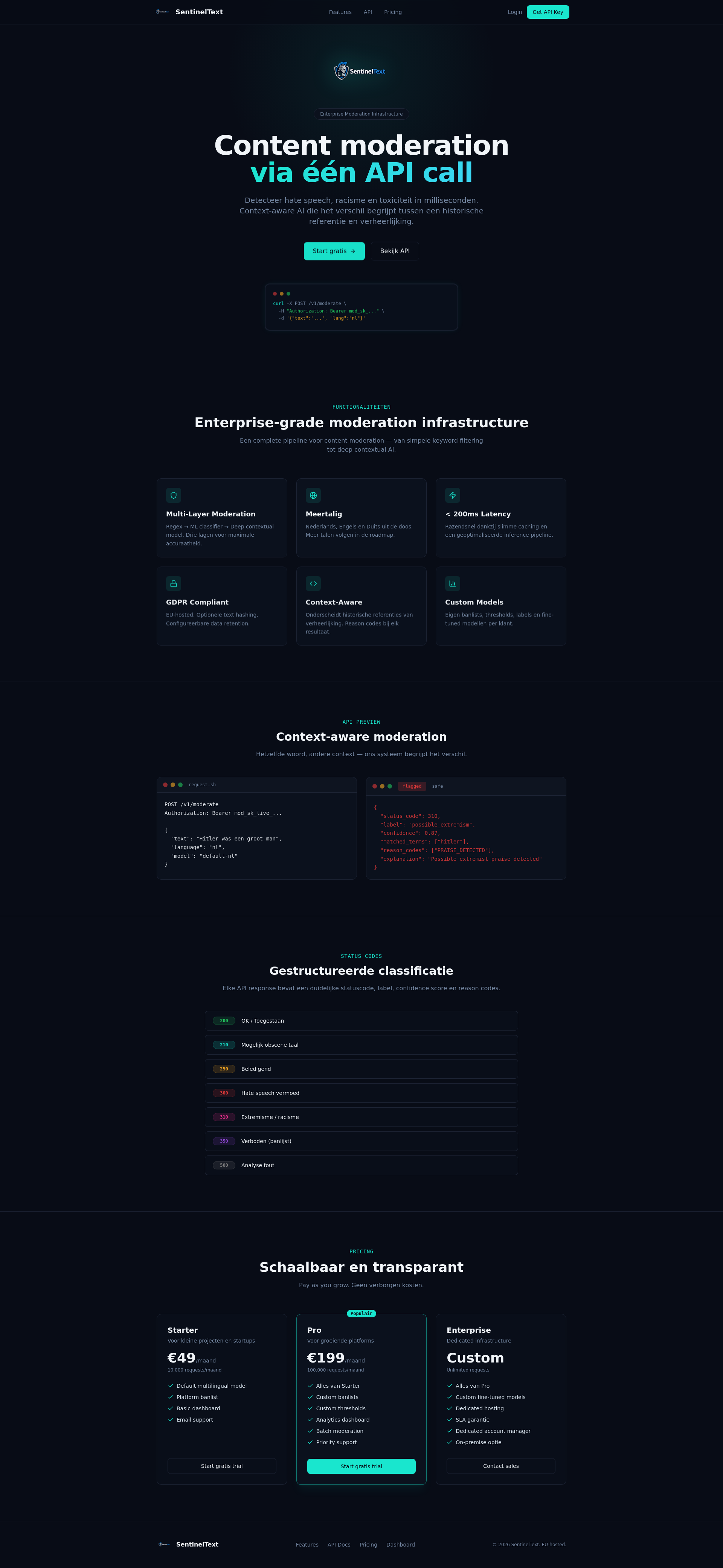

SentinelText – Context-aware AI moderation API

Summary: SentinelText is an AI-powered moderation API that detects toxic, abusive, and hateful content in real time using multi-model analysis. It offers flexible model selection per request and easy integration for developers building safer apps and chat platforms.

What it does

SentinelText analyzes user-generated text to identify harmful language, negative stereotypes, and hidden profanity, including obfuscated words. It also offers a playground for testing the API and generating API keys before integration.
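To make "obfuscated words" concrete, here is a minimal sketch of the kind of text normalization a moderation pipeline might run before matching. It is not SentinelText's actual method, which the listing does not describe. The substitution table, the `normalize` and `contains_blocked` helpers, and the sample blocklist are all hypothetical and exist only for illustration.

```python
import re

# Hypothetical substitution table (assumption, not SentinelText's mapping):
# maps common look-alike characters back to the letters they stand in for.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})


def normalize(text: str) -> str:
    """Lowercase the text and undo a few simple obfuscation tricks."""
    lowered = text.lower().translate(LEET_MAP)
    # Drop separators placed between letters, e.g. "i.d.i.o.t" -> "idiot".
    joined = re.sub(r"(?<=[a-z])[.\-_*]+(?=[a-z])", "", lowered)
    # Collapse runs of three or more identical letters, e.g. "idiooot" -> "idiot".
    return re.sub(r"([a-z])\1{2,}", r"\1", joined)


def contains_blocked(text: str, blocklist: set[str]) -> bool:
    """Return True if any normalized word in the text is in the blocklist."""
    tokens = re.findall(r"[a-z]+", normalize(text))
    return any(token in blocklist for token in tokens)


if __name__ == "__main__":
    sample_blocklist = {"idiot"}  # illustrative only
    for message in ["You 1d10t", "you i.d.i.o.t", "hello there"]:
        print(message, "->", contains_blocked(message, sample_blocklist))
```

A rule-based pass like this is cheap but crude: it also rewrites legitimate digits and hyphenated words, and it has no notion of context. That gap is what context-aware, model-based moderation is meant to close.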

Who it's for

Developers of modern applications, chat platforms, and AI tools seeking automated, context-aware content moderation.

Why it matters

It targets subtle harmful content, such as obfuscated profanity and negative stereotypes, that many existing moderation solutions miss or handle inefficiently.