QuarterBit AXIOM

Train 70B AI models on 1 GPU instead of 11

#Developer Tools

#Artificial Intelligence

#Tech

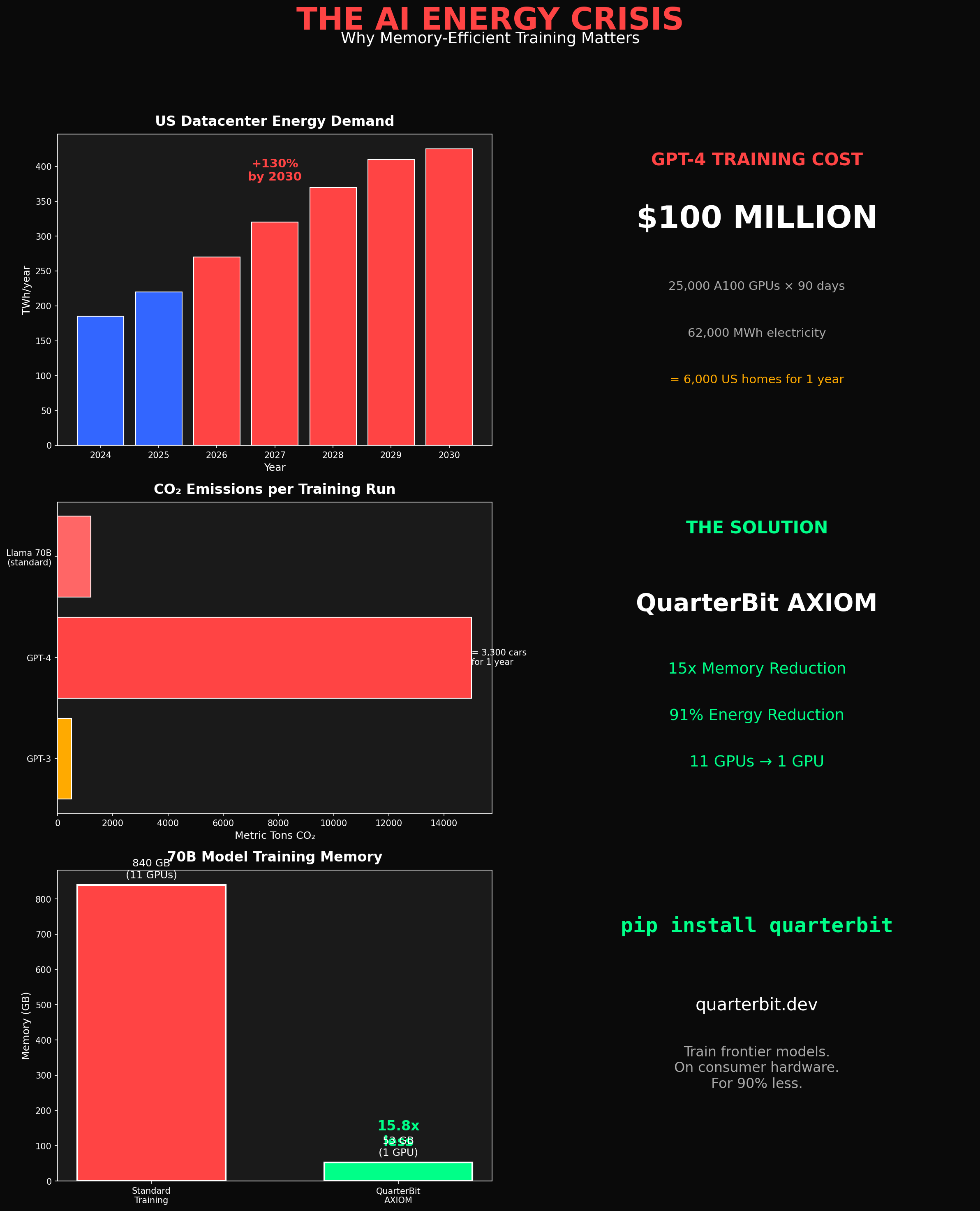

Summary: QuarterBit AXIOM reduces the memory needed for AI training by a factor of 15, so a 70-billion-parameter model can be trained on one GPU instead of eleven. It supports full fine-tuning with all parameters trainable, and needing fewer GPUs lowers both cost and energy consumption.

What it does

AXIOM reduces the GPU memory needed to train large AI models. By compressing training memory by a factor of 15, it allows full fine-tuning of a 70B-parameter model on a single GPU.
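
As a rough sanity check on the 11-to-1 GPU figure, the arithmetic can be sketched in a few lines of Python. This is a back-of-envelope estimate, not AXIOM's own accounting: the 12 bytes per parameter (for example, 16-bit weights and gradients plus two 32-bit Adam optimizer states) and the 80 GB of memory per GPU are assumptions that do not come from the listing, and activation memory is ignored. Only the 70B model size and the 15x factor are taken from the text above.

```python
import math

# Assumptions (NOT from the listing): bytes of training state per parameter
# and memory per GPU. Activations and framework overhead are ignored.
ASSUMED_BYTES_PER_PARAM = 12   # e.g. 2 (weights) + 2 (grads) + 8 (Adam states)
ASSUMED_GPU_MEMORY_GB = 80

# Figures taken from the listing.
PARAMS_BILLION = 70
CLAIMED_COMPRESSION = 15


def training_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Training-state memory in GB: 1e9 params * bytes / 1e9 bytes per GB."""
    return params_billion * bytes_per_param


def gpus_needed(memory_gb: float, gpu_memory_gb: float) -> int:
    """Smallest whole number of GPUs whose combined memory holds memory_gb."""
    return math.ceil(memory_gb / gpu_memory_gb)


baseline_gb = training_memory_gb(PARAMS_BILLION, ASSUMED_BYTES_PER_PARAM)
compressed_gb = baseline_gb / CLAIMED_COMPRESSION

print(f"baseline:   {baseline_gb:.0f} GB -> "
      f"{gpus_needed(baseline_gb, ASSUMED_GPU_MEMORY_GB)} GPUs")
print(f"compressed: {compressed_gb:.0f} GB -> "
      f"{gpus_needed(compressed_gb, ASSUMED_GPU_MEMORY_GB)} GPU")
```

Under these assumptions the baseline comes to 840 GB (11 GPUs) and the compressed footprint to 56 GB (1 GPU), which is consistent with the claim. Different assumptions about precision or GPU size would shift the baseline count.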

Who it's for

Developers and researchers who need to train large AI models but lack access to extensive GPU resources.

Why it matters

It lowers the financial and energy barriers to training large AI models, making advanced AI development more accessible.