PromptBench

Version, test & compare your AI prompts across models

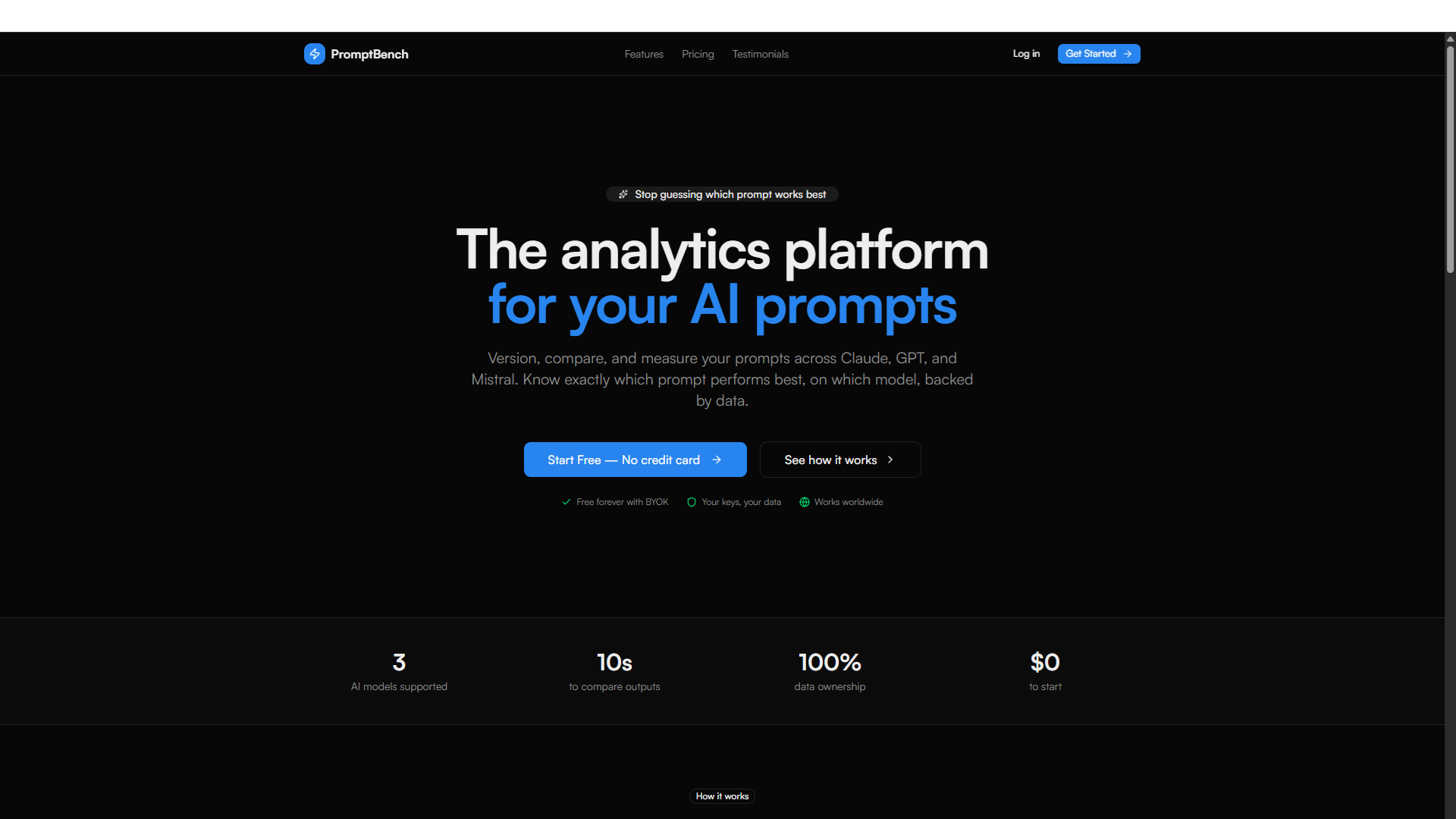


Summary: PromptBench enables users to run identical prompts on up to 10 AI models including Claude, GPT-5, o3, and Mistral, score outputs from 1 to 10, and track prompt performance over time using analytics. It supports prompt versioning and offers chat and completion modes for iterative prompt engineering.

What it does

PromptBench provides a multi-model playground for testing and comparing prompts side by side, with 1-to-10 scoring of outputs and an analytics dashboard for monitoring results over time. Users can manage prompt versions and switch between chat and completion modes.
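
To make the workflow concrete, here is a minimal sketch of the idea in Python: one prompt version is sent to several models, and each output is scored from 1 to 10. This is not PromptBench's API. `PromptVersion`, `run_across_models`, and the stand-in "models" (plain functions) are all hypothetical names used only for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import Callable, Dict, List

MAX_MODELS = 10  # the listing mentions "up to 10 AI models"


@dataclass
class PromptVersion:
    """One version of a prompt plus the scores given to its outputs (hypothetical)."""
    version: int
    text: str
    scores: Dict[str, List[int]] = field(default_factory=dict)

    def record(self, model: str, score: int) -> None:
        # Scores follow the 1-to-10 scale described in the listing.
        if not 1 <= score <= 10:
            raise ValueError("score must be between 1 and 10")
        self.scores.setdefault(model, []).append(score)

    def average(self, model: str) -> float:
        return mean(self.scores[model])


def run_across_models(
    prompt: PromptVersion, models: Dict[str, Callable[[str], str]]
) -> Dict[str, str]:
    """Send the identical prompt text to every model and collect the outputs."""
    if len(models) > MAX_MODELS:
        raise ValueError(f"at most {MAX_MODELS} models per run")
    return {name: call(prompt.text) for name, call in models.items()}


# Stand-in "models": simple functions, not real model clients.
models = {
    "model-a": lambda p: p.upper(),
    "model-b": lambda p: p[::-1],
}

v1 = PromptVersion(version=1, text="Summarize this text")
outputs = run_across_models(v1, models)

# A reviewer scores the outputs by hand after reading them.
v1.record("model-a", 7)
v1.record("model-a", 9)
```

Keeping scores attached to a specific prompt version is what would let per-model averages be compared from one version to the next.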

Who it's for

PromptBench is aimed at AI developers and prompt engineers who need to evaluate and optimize prompts across multiple language models.

Why it matters

It addresses the challenge of tracking and improving prompt effectiveness by enabling systematic testing and version control across different AI models.