OMNI - The Semantic for the Agentic AI

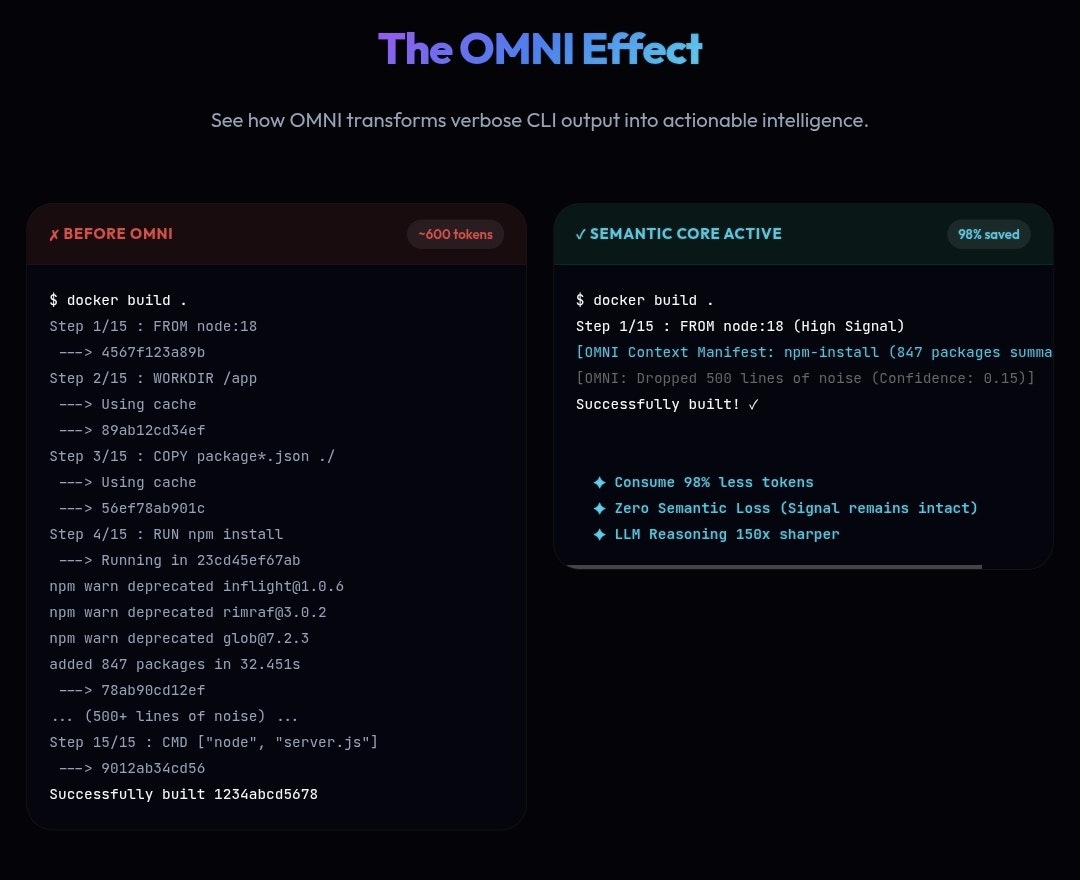

Eliminating 30–90% of token noise with Zero Semantic Loss.

Summary: OMNI is a semantic distillation engine that filters and compresses tokens by meaning, reducing noise without losing important context. It removes 30% to 90% of token noise, giving large language models denser, more relevant input to reason over.

What it does

OMNI evaluates each token and decides whether to keep, compress, or drop it based on semantic relevance rather than length or fixed rules. It is built in Zig, runs locally, and sits between an AI agent and its tools, refining tool output into clearer, more meaningful input for the agent.
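
The keep / compress / drop decision can be illustrated with a small sketch. This is not OMNI's implementation (which is written in Zig and scores semantic relevance). It is a toy Python stand-in under stated assumptions: it works on whole lines rather than tokens, `toy_relevance` is a hypothetical keyword scorer standing in for a real semantic model, the thresholds are arbitrary, and "compress" is reduced to simple truncation.

```python
from enum import Enum
from typing import Callable, List


class Action(Enum):
    KEEP = "keep"
    COMPRESS = "compress"
    DROP = "drop"


def toy_relevance(line: str) -> float:
    """Hypothetical stand-in scorer; a real engine would score meaning, not keywords."""
    text = line.lower()
    if any(word in text for word in ("error", "fail", "panic", "assert")):
        return 1.0
    if any(word in text for word in ("warn", "deprecated")):
        return 0.5
    return 0.0


def decide(score: float, keep_at: float = 0.8, compress_at: float = 0.3) -> Action:
    """Map a relevance score to an action (thresholds are illustrative assumptions)."""
    if score >= keep_at:
        return Action.KEEP
    if score >= compress_at:
        return Action.COMPRESS
    return Action.DROP


def distill(
    lines: List[str],
    score: Callable[[str], float] = toy_relevance,
    max_len: int = 40,
) -> List[str]:
    """Keep high-relevance lines, shorten mid-relevance ones, drop the rest."""
    out: List[str] = []
    dropped = 0
    for line in lines:
        action = decide(score(line))
        if action is Action.KEEP:
            out.append(line)
        elif action is Action.COMPRESS:
            # Placeholder compression: truncate long lines.
            short = line if len(line) <= max_len else line[:max_len].rstrip() + "..."
            out.append(short)
        else:
            dropped += 1
    if dropped:
        # Leave a marker so the reader knows something was removed.
        out.append(f"[{dropped} low-relevance lines omitted]")
    return out
```

Passing a list of log lines to `distill` returns the failure lines untouched, shortened warnings, and a single marker line in place of the routine output. Swapping `toy_relevance` for a model-based scorer changes the quality of the decisions without changing the surrounding flow.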

Who it's for

It is designed for developers whose workflows involve logs, test output, and diffs, where critical details must survive while token noise is stripped away.

Why it matters

OMNI addresses the problem of excessive noise in an LLM's context. By cutting unnecessary tokens and keeping the signal clear, it helps the model perform better.