Ollama Explorer

Find the right local AI model for your hardware instantly


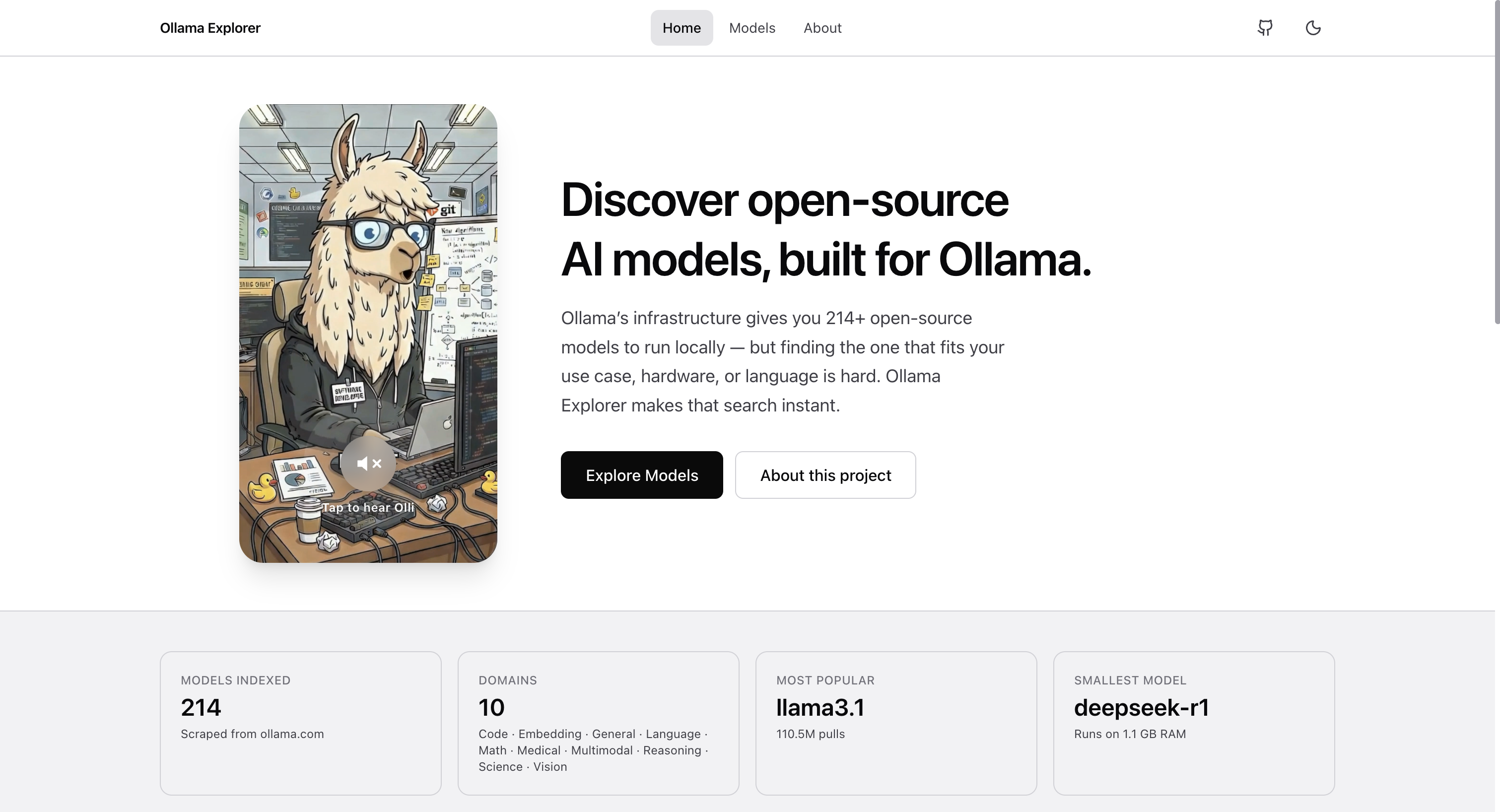

Summary: Ollama Explorer is an open-source tool for narrowing more than 200 Ollama models down to the ones that fit your use case and hardware. It lists each model's RAM requirement, parameter size, and recommended tasks, with no signup and no ads.

What it does

It filters models by use case, domain, RAM requirement, parameter size, and context length, and shows each model's hardware needs and pull count so users can pick one their machine can actually run.
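The filtering described above can be pictured with a small Python sketch. This is not Ollama Explorer's actual code or data: the `Model` fields, the `filter_models` helper, and the three catalog entries are hypothetical, made up purely to illustrate filtering by use case, RAM, parameter size, and context length, then ranking by pull count. The domain filter is left out for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    """Hypothetical catalog record; field names are assumptions."""
    name: str
    use_cases: tuple[str, ...]
    ram_gb: float        # approximate RAM needed to run the model
    params_b: float      # parameter count, in billions
    context_len: int     # maximum context length, in tokens
    pulls: int           # download count, used here for ranking


# Made-up entries for illustration only; not real model data.
CATALOG = [
    Model("example-small", ("chat",), 4.0, 3.0, 8192, 120_000),
    Model("example-coder", ("code", "chat"), 8.0, 7.0, 32768, 450_000),
    Model("example-large", ("chat", "reasoning"), 48.0, 70.0, 131072, 900_000),
]


def filter_models(catalog, *, use_case=None, max_ram_gb=None,
                  max_params_b=None, min_context=None):
    """Return models matching every supplied filter, most-pulled first.

    A filter left as None is ignored.
    """
    matches = [
        m for m in catalog
        if (use_case is None or use_case in m.use_cases)
        and (max_ram_gb is None or m.ram_gb <= max_ram_gb)
        and (max_params_b is None or m.params_b <= max_params_b)
        and (min_context is None or m.context_len >= min_context)
    ]
    return sorted(matches, key=lambda m: m.pulls, reverse=True)


# Chat-capable models that fit in 16 GB of RAM.
for m in filter_models(CATALOG, use_case="chat", max_ram_gb=16):
    print(f"{m.name}: {m.ram_gb} GB RAM, {m.params_b}B params, {m.pulls} pulls")
```

Treating every filter as optional mirrors how a faceted search UI typically behaves: each control narrows the list only when it is set.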

Who it's for

Anyone who wants to know which Ollama models will run well on their hardware and suit their specific tasks.

Why it matters

It solves the difficulty of choosing appropriate local AI models by providing a fast, filterable interface that matches models to hardware capabilities and user needs.