Nimbus

Local proxy that gives you ~$1,000/mo of free AI compute

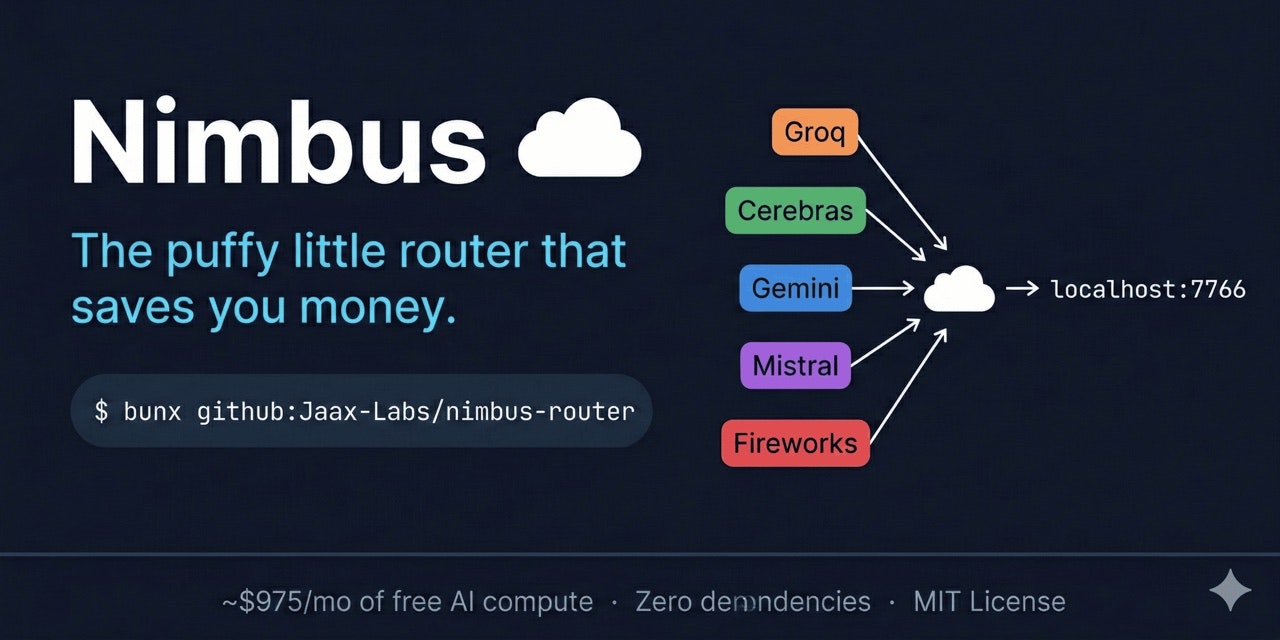

Nimbus – Local proxy providing free AI compute from multiple LLM providers

Summary: Nimbus is a zero-dependency local proxy that routes AI requests to the free API tiers of nine providers and exposes them through a unified OpenAI-compatible endpoint on localhost. It supports coding, chatting, and running evaluations across many models at once, and the combined free tiers add up to roughly $1,000/month of compute.

What it does

Nimbus aggregates free API tiers from providers such as Cerebras, Gemini, and Groq and exposes them through a single OpenAI-compatible endpoint at localhost:7766, usable from any software that accepts an API key. To optimize requests, it uses concurrent speculative execution, auto-quarantine of dead keys, and context estimation.
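To make "concurrent speculative execution" and "auto-quarantine" concrete, here is a minimal sketch of the general pattern: send the same request to several providers at once, return the first successful answer, and set aside any provider whose call fails. This is not Nimbus's actual implementation, which the description does not show. The names `call_provider` and `race` are hypothetical, the provider calls are simulated with delays, and quarantining on any error is a simplifying assumption.

```python
import asyncio


# Hypothetical stand-in for a provider call; a real proxy would make an
# HTTP request here. `delay` and `fail` simulate latency and a dead key.
async def call_provider(name: str, delay: float, fail: bool) -> str:
    await asyncio.sleep(delay)
    if fail:
        raise RuntimeError(f"{name}: key rejected")
    return f"response from {name}"


async def race(providers: dict[str, tuple[float, bool]], quarantined: set[str]) -> str:
    """Query every non-quarantined provider concurrently; return the first success."""
    tasks = {
        asyncio.create_task(call_provider(name, delay, fail)): name
        for name, (delay, fail) in providers.items()
        if name not in quarantined
    }
    pending = set(tasks)
    try:
        while pending:
            done, pending = await asyncio.wait(
                pending, return_when=asyncio.FIRST_COMPLETED
            )
            winner = None
            for task in done:
                if task.exception() is not None:
                    # Assumption: any failure quarantines the provider.
                    quarantined.add(tasks[task])
                elif winner is None:
                    winner = task.result()
            if winner is not None:
                return winner
        raise RuntimeError("all providers failed")
    finally:
        # Cancel the slower calls that are still in flight.
        for task in pending:
            task.cancel()
```

With simulated providers such as `{"fast-bad": (0.01, True), "slow-good": (0.05, False)}`, `asyncio.run(race(providers, quarantined))` returns the response from the first provider that succeeds and leaves the failed one in `quarantined`, so later requests skip it.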

Who it's for

Developers and other users who want to code, chat, or evaluate multiple large language models simultaneously through a unified local API.
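As an illustration of evaluating several models through the one local endpoint, the sketch below builds one chat request per model using only the Python standard library. The port 7766 comes from the description. The `/v1/chat/completions` path follows the usual OpenAI convention and is an assumption here, as are the placeholder API key, the model names, and the helper names `build_chat_request` and `build_eval_batch`.

```python
import json
import urllib.request

# Port taken from the description; the /v1 prefix is an assumption
# based on the usual OpenAI-compatible layout.
BASE_URL = "http://localhost:7766/v1"


def build_chat_request(model: str, prompt: str, api_key: str = "local") -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,  # hypothetical model name supplied by the caller
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            # Placeholder key: whether the proxy checks it is not stated.
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def build_eval_batch(models: list[str], prompt: str) -> dict[str, urllib.request.Request]:
    """Prepare the same prompt for several models, keyed by model name."""
    return {model: build_chat_request(model, prompt) for model in models}
```

With the proxy running, each prepared request could be sent with `urllib.request.urlopen(request)` and the JSON responses compared side by side.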

Why it matters

It consolidates free AI compute resources into one local proxy, enabling extensive multi-model access without incurring costs or managing multiple APIs.