NeoSmith AI

Custom SLM for AI Agents: 40–55% cheaper, 3–5x faster

NeoSmith AI – Custom Small Language Models for Faster, Cheaper AI Agents

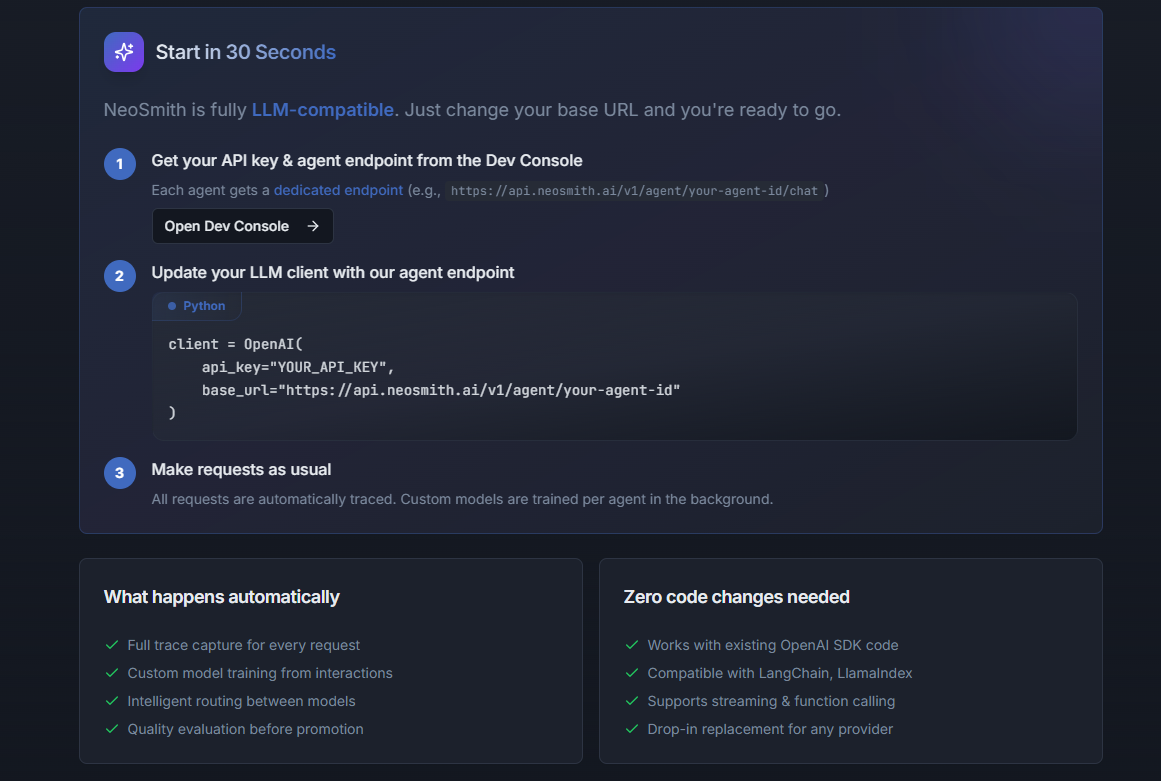

Summary: NeoSmith AI trains custom Small Language Models (SLMs) on your LLM interaction logs to cut inference costs by 40–55% and make inference 3–5x faster. Because each SLM is specialized to your workload, it handles 80–90% of agent tasks with improved accuracy. Adopting it requires only swapping a single endpoint, with no MLOps on your side.
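To show what "a single endpoint swap" can mean in practice, here is a minimal sketch that assumes your agent already reads its model URL from configuration. The URLs, the `AGENT_MODEL_URL` variable, and the `{"prompt": ...}` payload are hypothetical placeholders, not NeoSmith's actual API. The code only builds requests (it never sends them), so you can compare the LLM and SLM variants side by side.

```python
import json
import os
import urllib.request
from typing import Optional

# Hypothetical URLs for illustration only; not taken from NeoSmith documentation.
DEFAULT_LLM_URL = "https://llm.example.com/v1/completions"


def build_request(prompt: str, endpoint: Optional[str] = None) -> urllib.request.Request:
    """Build (but do not send) a completion request for the configured endpoint.

    The endpoint comes from the argument, then the hypothetical AGENT_MODEL_URL
    environment variable, then the default LLM URL. Nothing else changes.
    """
    url = endpoint or os.environ.get("AGENT_MODEL_URL", DEFAULT_LLM_URL)
    body = json.dumps({"prompt": prompt}).encode("utf-8")  # assumed payload schema
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Same agent code, same payload; only the endpoint differs.
llm_req = build_request("Summarize ticket #123")
slm_req = build_request(
    "Summarize ticket #123", endpoint="https://slm.example.com/v1/completions"
)
```

The design choice here is to keep the model location as configuration rather than code, so the rest of the agent (prompts, parsing, tools) stays untouched when the backing model changes.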

What it does

NeoSmith distills a custom SLM from your LLM logs. You don't need to label data or run fine-tuning infrastructure, because all training runs on NeoSmith's side. The resulting model is specialized to your domain, and it is faster and cheaper to run than a general-purpose LLM.
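Distilling from logs needs no manual labeling because the original LLM's responses can serve as training targets for the smaller model, an approach generally known as sequence-level knowledge distillation. The sketch below is not NeoSmith's pipeline. It is a minimal illustration, assuming a hypothetical JSONL log format with `prompt`, `response`, and an optional `status` field, of how such logs could be turned into prompt/completion training pairs.

```python
import json
from typing import Dict, Iterable, List, Set, Tuple


def logs_to_training_pairs(lines: Iterable[str]) -> List[Dict[str, str]]:
    """Turn JSONL interaction logs into (prompt, completion) training pairs.

    The log schema ("prompt", "response", optional "status") is hypothetical.
    """
    seen: Set[Tuple[str, str]] = set()
    pairs: List[Dict[str, str]] = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed log lines
        if not isinstance(record, dict):
            continue  # skip valid JSON that isn't a log record
        if record.get("status", "ok") != "ok":
            continue  # drop failed calls so errors aren't distilled into the student
        prompt = str(record.get("prompt") or "").strip()
        response = str(record.get("response") or "").strip()
        if not prompt or not response:
            continue
        if (prompt, response) in seen:
            continue  # exact duplicates add no new signal
        seen.add((prompt, response))
        # The teacher LLM's own output is the target, so no human labels are needed.
        pairs.append({"prompt": prompt, "completion": response})
    return pairs


sample_log = [
    '{"prompt": "Classify: refund request", "response": "billing"}',
    '{"prompt": "Classify: refund request", "response": "billing"}',
    '{"prompt": "Classify: login fails", "response": "", "status": "error"}',
    "not json",
]
pairs = logs_to_training_pairs(sample_log)
```

On the sample above, only one pair survives: the duplicate, the failed call, and the malformed line are all filtered out. A real pipeline would likely add more filtering (for example, quality checks on the teacher's outputs) before training.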

Who it's for

Engineering teams with high LLM costs in production, especially those whose AI agents perform repetitive tasks, benefit most from NeoSmith's tailored SLM approach.

Why it matters

Running large general-purpose models on narrow, repetitive workloads is expensive and slow. A specialized SLM addresses both problems by offering a more efficient and more accurate alternative for those workloads.