Nemotron 3 Super

Open hybrid Mamba-Transformer MoE for agentic reasoning


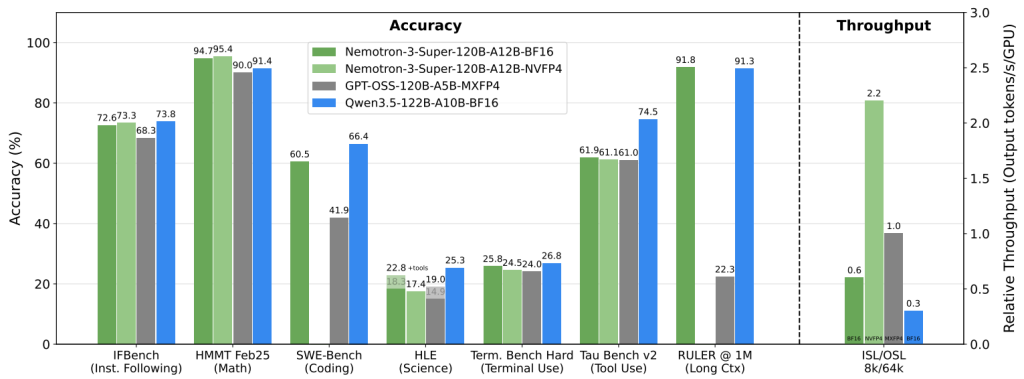

Summary: Nemotron 3 Super is NVIDIA's open 120B-parameter model with 12B active parameters, a 1M-token context window, and a hybrid Mamba-Transformer MoE architecture designed for coding, long-context reasoning, and multi-agent tasks. It targets two problems: the computational overhead of large reasoning models and context explosion in extended tool interactions.

What it does

It uses a hybrid Mamba-Transformer LatentMoE design with multi-token prediction to enable efficient long-context processing and faster generation for complex workloads.
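To make the "active parameters" idea concrete, here is a minimal sketch of generic top-k mixture-of-experts routing. It is an illustration under stated assumptions, not NVIDIA's LatentMoE implementation: the expert count, the value of `k`, the router scores, and the helper names `route_top_k` and `moe_forward` are all hypothetical. Only the 120B and 12B figures come from the summary above.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    top = ranked[:k]
    # Renormalize the scores over the selected experts only.
    weights = softmax([router_logits[i] for i in top])
    return list(zip(top, weights))

def moe_forward(x, experts, router_logits, k):
    """Run only the selected experts and mix their outputs by router weight."""
    out = 0.0
    for idx, weight in route_top_k(router_logits, k):
        out += weight * experts[idx](x)
    return out

# Toy setup (hypothetical): 8 scalar "experts", 2 of which run per token.
experts = [lambda x, scale=scale: scale * x for scale in range(1, 9)]
router_logits = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]

selected = route_top_k(router_logits, k=2)
y = moe_forward(1.0, experts, router_logits, k=2)

# Figures from the summary: 12B active out of 120B total parameters.
active_fraction = 12 / 120
```

In this toy setup only two of the eight experts are evaluated per input, which is the general reason a sparse MoE model can have far more total parameters than it uses on any single token. In a real model the active fraction does not equal `k` divided by the expert count, because shared layers (attention, Mamba blocks, embeddings) run for every token.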

Who it's for

Developers and researchers who need a scalable, open large language model for coding, long-context reasoning, and multi-agent systems.

Why it matters

It reduces the "thinking tax", the compute overhead that large reasoning models incur, and limits context drift, making extended multi-agent workflows more practical.