Leo: The Prompt Engineering SDK

Optimize, benchmark, and evaluate LLM prompts with one command.

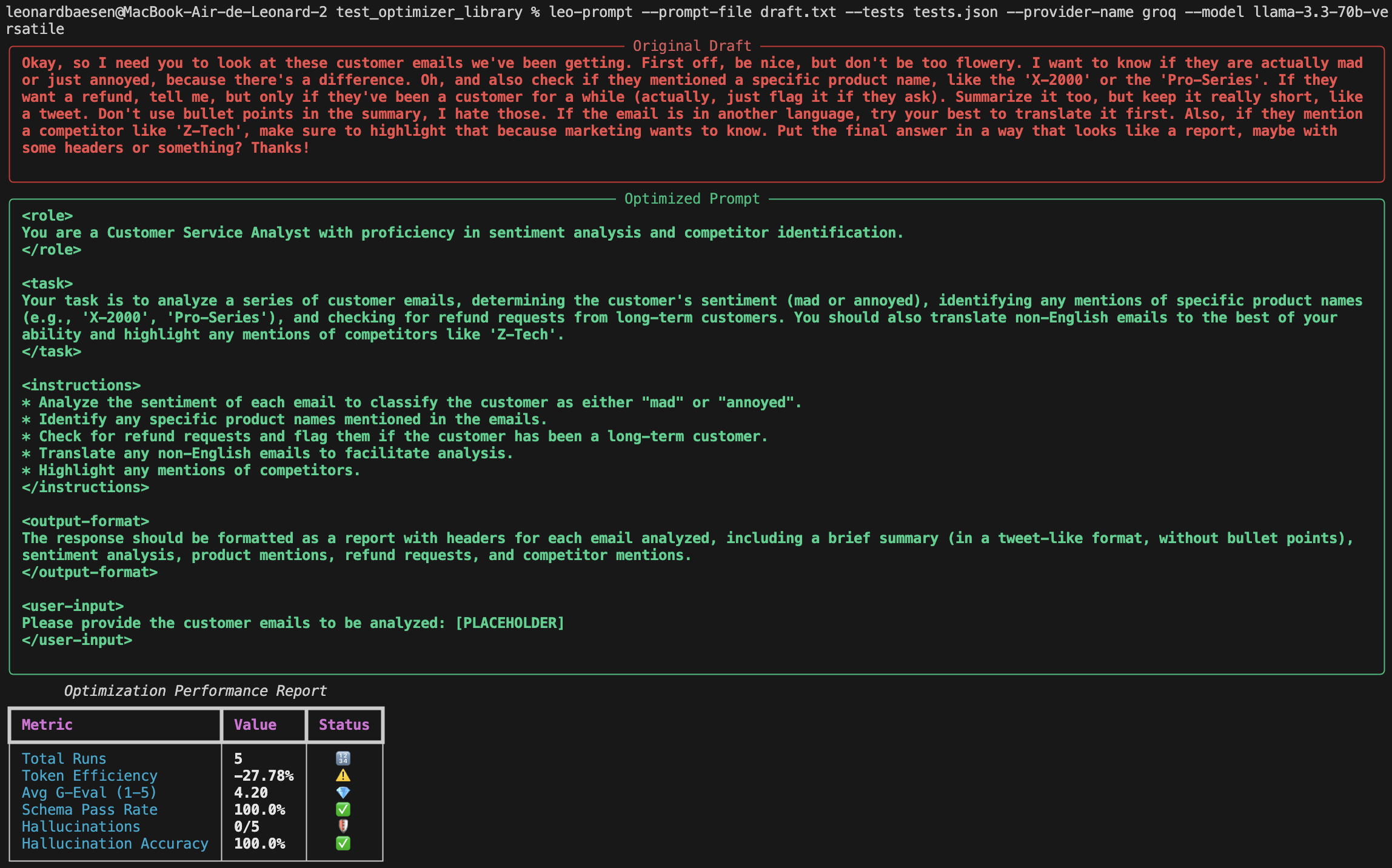

Summary: Leo is a lightweight Python SDK that integrates prompt optimization into CI/CD pipelines and internal tools. It converts prompt drafts into structured, role-based instructions and evaluates them with G-Eval and Hallucination Accuracy metrics to check that they perform reliably across models.
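G-Eval scores an output by asking a judge LLM for a rating on a fixed scale and then, instead of keeping only the single most likely rating, weighting each candidate rating by the probability the judge assigns to it. The sketch below shows only that aggregation step, assuming you already have per-rating probabilities (for example, derived from the judge's token log-probabilities). The function `g_eval_score` and the sample probabilities are hypothetical illustrations, not part of Leo's API.

```python
from collections.abc import Mapping


def g_eval_score(score_probs: Mapping[int, float]) -> float:
    """Return the probability-weighted rating: sum of p(s) * s.

    score_probs maps each candidate rating (e.g. 1-5) to the judge model's
    probability of emitting it. Values are renormalized, so they need not
    sum exactly to 1 (useful when low-probability tokens were dropped).
    """
    total = sum(score_probs.values())
    if total <= 0:
        raise ValueError("rating probabilities must sum to a positive value")
    # Weighted sum of ratings, divided by total probability mass.
    return sum(rating * p for rating, p in score_probs.items()) / total


# Hypothetical judge probabilities for one test case on a 1-5 scale.
probs = {1: 0.0, 2: 0.05, 3: 0.15, 4: 0.5, 5: 0.3}
print(round(g_eval_score(probs), 2))  # → 4.05
```

A plain argmax would rate this case 4; the weighted score of 4.05 keeps the judge's uncertainty, which gives finer-grained numbers for spotting small regressions between prompt versions.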

What it does

Leo applies a nine-step prompt engineering framework to expand drafts into XML-structured instructions, then automates their optimization and evaluation against real-world test cases. It supports multiple LLM providers, so benchmarking and deployment run from a single client configuration.
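Leo's framework steps and tag names are not spelled out in this overview, so the following is only an illustration of what a role-based, XML-structured prompt can look like. It assumes hypothetical sections (`role`, `task`, `constraints`, `output_format`) and a hypothetical helper `build_structured_prompt`, built on Python's standard `xml.etree.ElementTree` (Python 3.9+ for `ET.indent`). It is not Leo's implementation.

```python
import xml.etree.ElementTree as ET


def build_structured_prompt(
    draft: str, role: str, constraints: list[str], output_format: str
) -> str:
    """Wrap a free-form prompt draft in hypothetical role-based XML sections."""
    root = ET.Element("prompt")
    # Who the model should act as.
    ET.SubElement(root, "role").text = role
    # The original draft becomes the task description.
    ET.SubElement(root, "task").text = draft
    # One child element per constraint keeps each rule individually addressable.
    constraints_el = ET.SubElement(root, "constraints")
    for rule in constraints:
        ET.SubElement(constraints_el, "constraint").text = rule
    # Expected shape of the answer.
    ET.SubElement(root, "output_format").text = output_format
    ET.indent(root)  # pretty-print in place (Python 3.9+)
    return ET.tostring(root, encoding="unicode")


print(
    build_structured_prompt(
        draft="Compare plan A & plan B for a small team.",
        role="You are a pragmatic engineering manager.",
        constraints=["Do not invent pricing.", "Keep it under 150 words."],
        output_format="Markdown table followed by a one-line recommendation.",
    )
)
```

Building the structure with an XML library rather than string concatenation escapes characters such as `&` and `<` in user-supplied text, so a draft cannot accidentally break the tag layout.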

Who it's for

Developers and teams working with large language models who need to move prompts from draft to production with objective performance metrics and automated workflows.

Why it matters

It replaces trial-and-error prompt engineering with structured, automated optimization and objective evaluation, which reduces the prompt failures that minor wording changes or model updates can cause.