Code Canary

Realtime Reporting of Coding Agent Performance

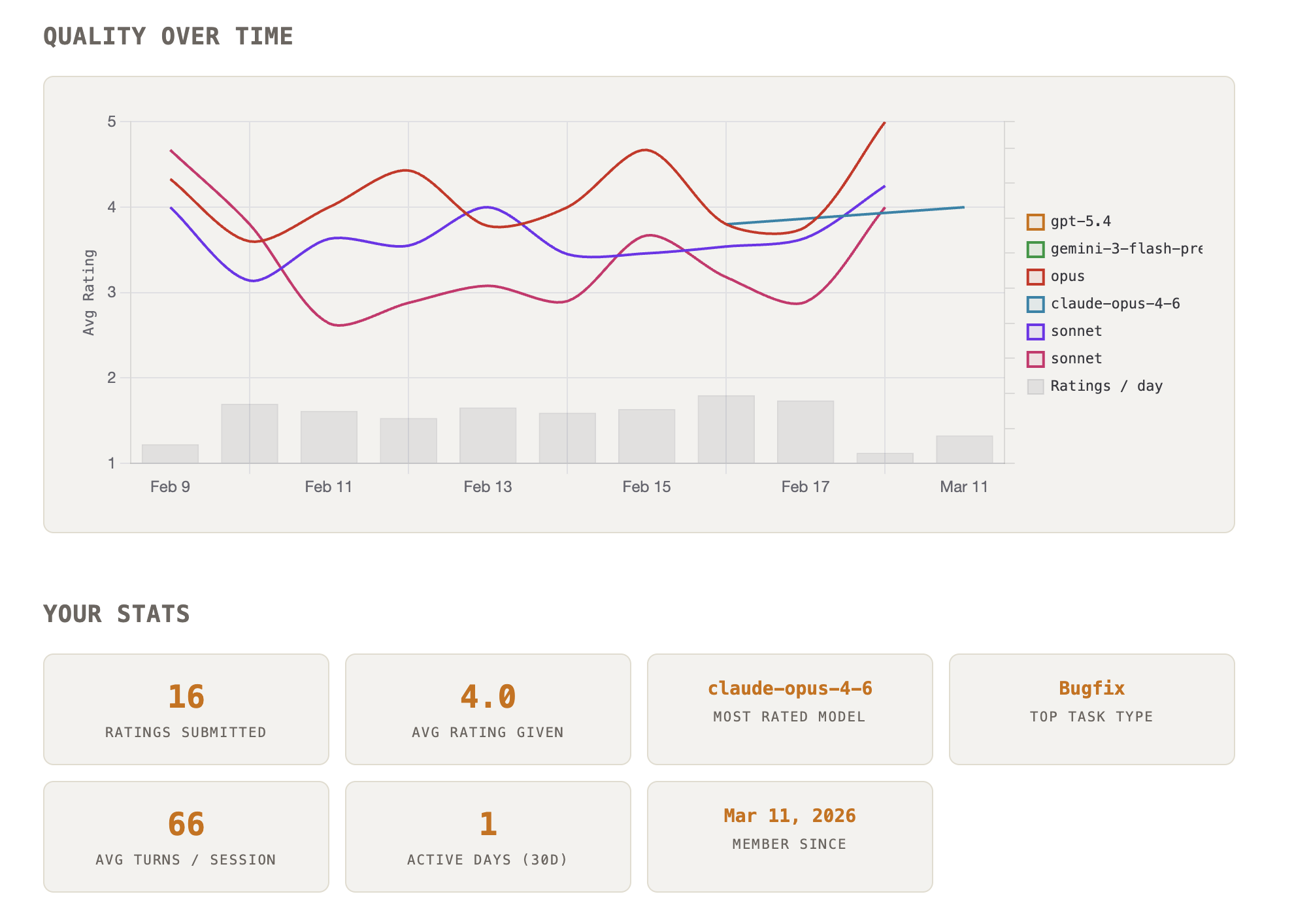

Summary: Code Canary collects realtime feedback from developers on AI coding agents such as Claude Code, Codex, and Gemini CLI to monitor the quality of their output. It aggregates these ratings into a continuously updated public comparison that helps identify service issues and model inconsistencies.

What it does

Code Canary lets developers rate their AI coding sessions, aggregates those ratings across users, and publishes the results on a live comparison dashboard. This tracks fluctuations in coding agent quality that traditional benchmarks do not capture.
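
As a rough illustration of the aggregation step described above, here is a minimal Python sketch. It is not Code Canary's actual implementation: the `Rating` record, the 1-5 score scale, the one-hour window, and the sample data are all assumptions made up for the example.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Rating:
    """One developer rating of one coding session (hypothetical schema)."""
    agent: str              # e.g. "Claude Code"
    score: int              # assumed 1-5 scale
    submitted_at: datetime


def average_by_agent(ratings, now, window=timedelta(hours=1)):
    """Return the mean score per agent for ratings submitted within `window` before `now`."""
    recent = defaultdict(list)
    for r in ratings:
        age = now - r.submitted_at
        if timedelta(0) <= age <= window:
            recent[r.agent].append(r.score)
    return {agent: mean(scores) for agent, scores in recent.items()}


# Made-up sample data, for illustration only.
now = datetime(2025, 1, 1, 12, 0)
ratings = [
    Rating("Claude Code", 5, now - timedelta(minutes=10)),
    Rating("Claude Code", 3, now - timedelta(minutes=20)),
    Rating("Codex", 4, now - timedelta(minutes=5)),
    Rating("Codex", 1, now - timedelta(hours=3)),  # older than the window, ignored
]
summary = average_by_agent(ratings, now)
```

Recomputing a windowed average like this at regular intervals is one simple way a dashboard could surface short-lived drops in quality; a real system would likely also need to account for sample size and rater bias.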

Who it's for

It is designed for developers and engineers who use AI coding agents and need insight into those agents' realtime reliability and performance.

Why it matters

It addresses the challenge of distinguishing between user error, codebase issues, and actual AI model or service problems by providing transparent, realtime quality data.