Certifai

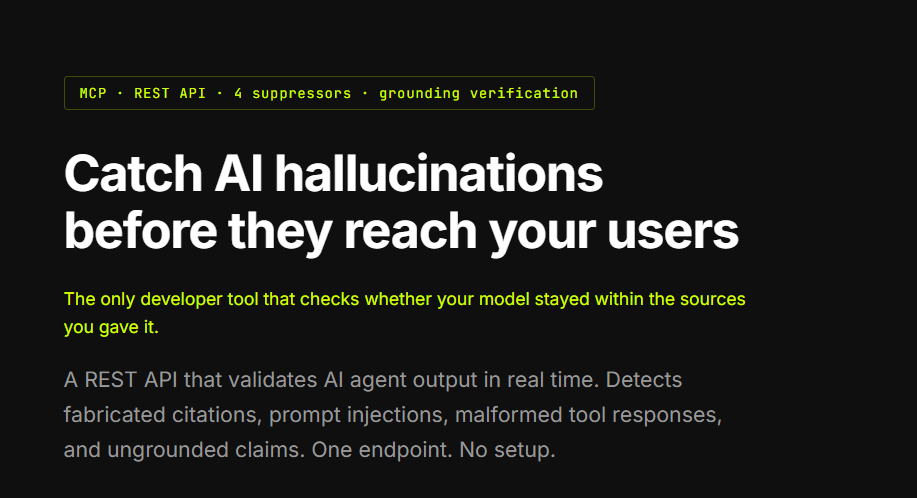

Catch AI hallucinations before they reach your users

Certifai – Detect structural AI hallucinations before deployment

Summary: Certifai is an API suite that detects structural AI hallucinations such as invented JSON fields, mismatched tool outputs, and prompt injections in retrieved content. It combines grounding checks, injection detection, JSON schema validation, and tool-response verification into a single call, preventing pipeline failures before outputs reach users.

What it does

Certifai runs four suppressors in one API call to validate AI agent outputs by enforcing grounding, checking for prompt injections, verifying JSON schemas, and confirming tool-response integrity. It is available as a REST API and MCP server with a free tier of 500 requests per month.

Who it's for

It is designed for developers building AI agents that rely on tools, retrieval, or structured outputs needing robust error detection before user delivery.

Why it matters

It prevents downstream failures caused by structural hallucinations that traditional truth-checking tools often miss, ensuring more reliable AI agent pipelines.