BASTYN

Build AI products your users can trust

BASTYN – Continuous AI behaviour validation for safer deployments

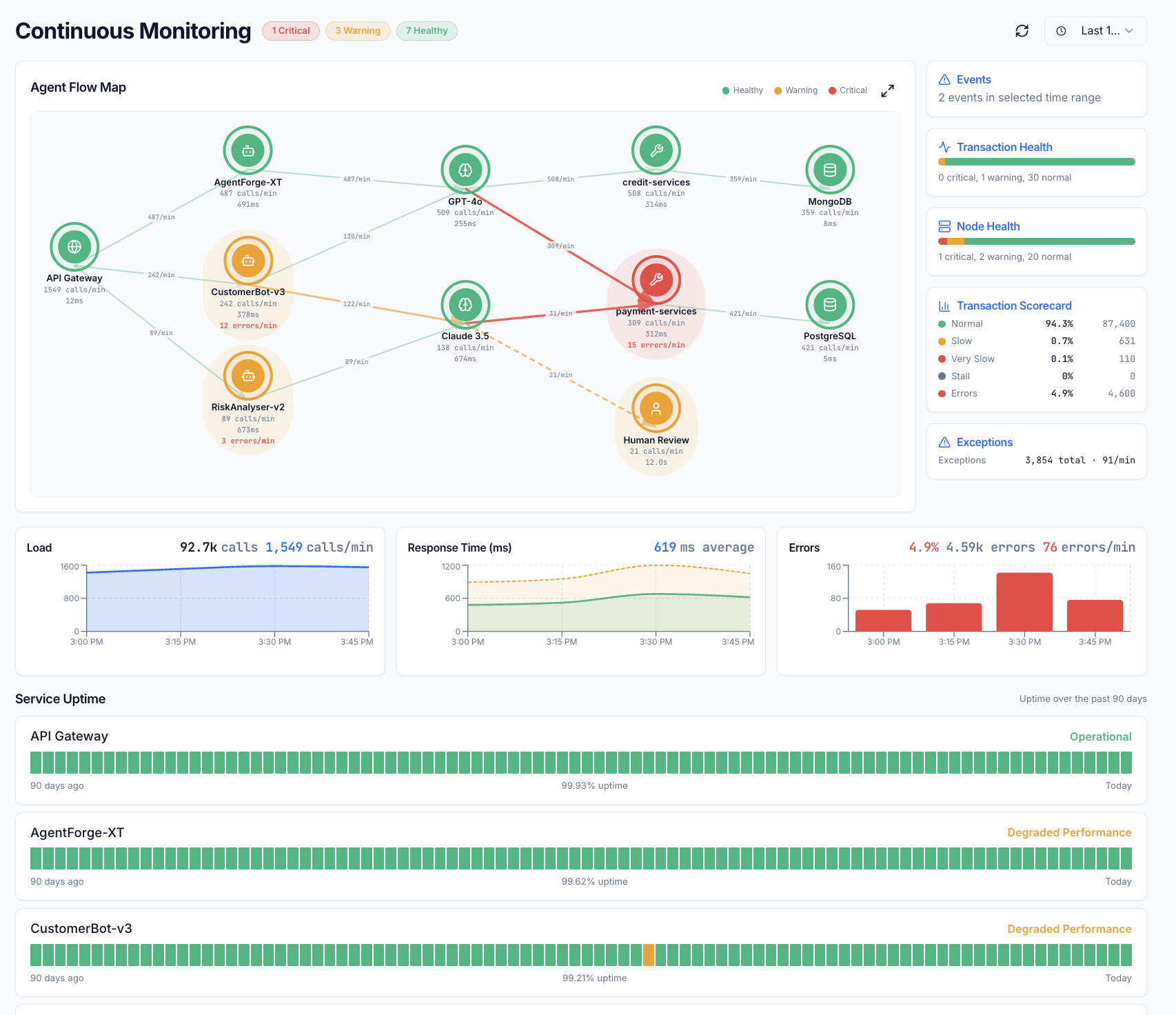

Summary: BASTYN continuously monitors AI agents after deployment, running 717 adversarial probes across 23 test vectors to detect hallucination, bias, prompt injection, and data exfiltration. It integrates major AI safety standards and provides risk grading with compliance evidence, enabling real-time verification of AI behaviour and revocation when it drifts.

What it does

BASTYN validates AI agent behaviour before launch, via CI/CD integration, and continuously after deployment. It detects vulnerabilities and evolving threats through adversarial testing, and grades risk with an algorithm that accounts for functional context, jurisdiction, and domain.

Who it's for

It is designed for teams building, deploying, or embedding AI agents who require ongoing safety verification and compliance assurance against emerging risks.

Why it matters

BASTYN addresses silent AI failures and evolving attack surfaces with autonomous, always-on monitoring and instant, verifiable trust signals, so that behaviour drift after shipping does not go undetected.